Emergency Safety App — Redesigning how people respond when seconds matter

The Challenge

When panic strikes, every second counts. Yet existing emergency apps were cluttered with features that became barriers during the moments that mattered most. People were freezing, fumbling, and failing to get help when they needed it desperately.

ZoneAid needed to become more than an app—it needed to be an instant lifeline that worked even when users couldn't think clearly, couldn't see properly, or couldn't use both hands.

The Solution

We stripped away everything non-essential and rebuilt the emergency experience around three core principles: speed, simplicity, and reliability. The new ZoneAid connects users to help in under 3 seconds, keeps its core emergency functions working offline, and holds up even in the most chaotic situations.

Design Journey

Discover

Understanding fear and urgency

Define

Clarity from chaos

Sketch

Life-saving blueprints

IA

Emergency system backbone

Wireframe

First true rehearsal

Prototype

Testing under pressure

Deliver

Promise of response

Discover

Walking through the world of fear

The research began in hospital waiting rooms and emergency dispatch centers, but the real learning happened in the quiet moments when people shared their stories of fear.

I sat with Maria, a working mother, as she recounted the night her apartment building caught fire. "I grabbed my phone to call 911, but my hands were shaking so badly I kept dialing wrong. My daughter was crying, smoke was everywhere, and I just... froze. I couldn't think. The emergency app I had installed? It had five different buttons and I had no idea which one to press."

At the dispatch center, Lieutenant Rodriguez explained the bottlenecks they see daily: "We get calls where people are so panicked they can't speak clearly. They can't remember their address. Sometimes we get pocket dials that could have been real emergencies. The technology should be helping them communicate, not adding another layer of complexity."

During a ride-along with paramedics, I witnessed a cardiac event where bystanders struggled with an emergency app that required them to create an account before calling for help. Precious minutes ticked by as someone fumbled through registration screens while a life hung in the balance.

"In emergencies, cognitive load becomes the enemy. Fear narrows attention, stress impairs fine motor skills, and panic clouds judgment. Every extra tap, every confusing icon, every moment of hesitation could mean the difference between life and death."

The patterns became clear through dozens of interviews. People didn't need more features during emergencies—they needed fewer barriers. They didn't need options—they needed action. They didn't need complexity—they needed clarity that cut through chaos.

"We didn't need more features. We needed fewer barriers."

This insight would reshape everything that followed. The research wasn't just data points—it was a collection of human stories that demanded we design not just for normal circumstances, but for the worst moments of people's lives. It set the stage for defining what truly mattered in those critical seconds.

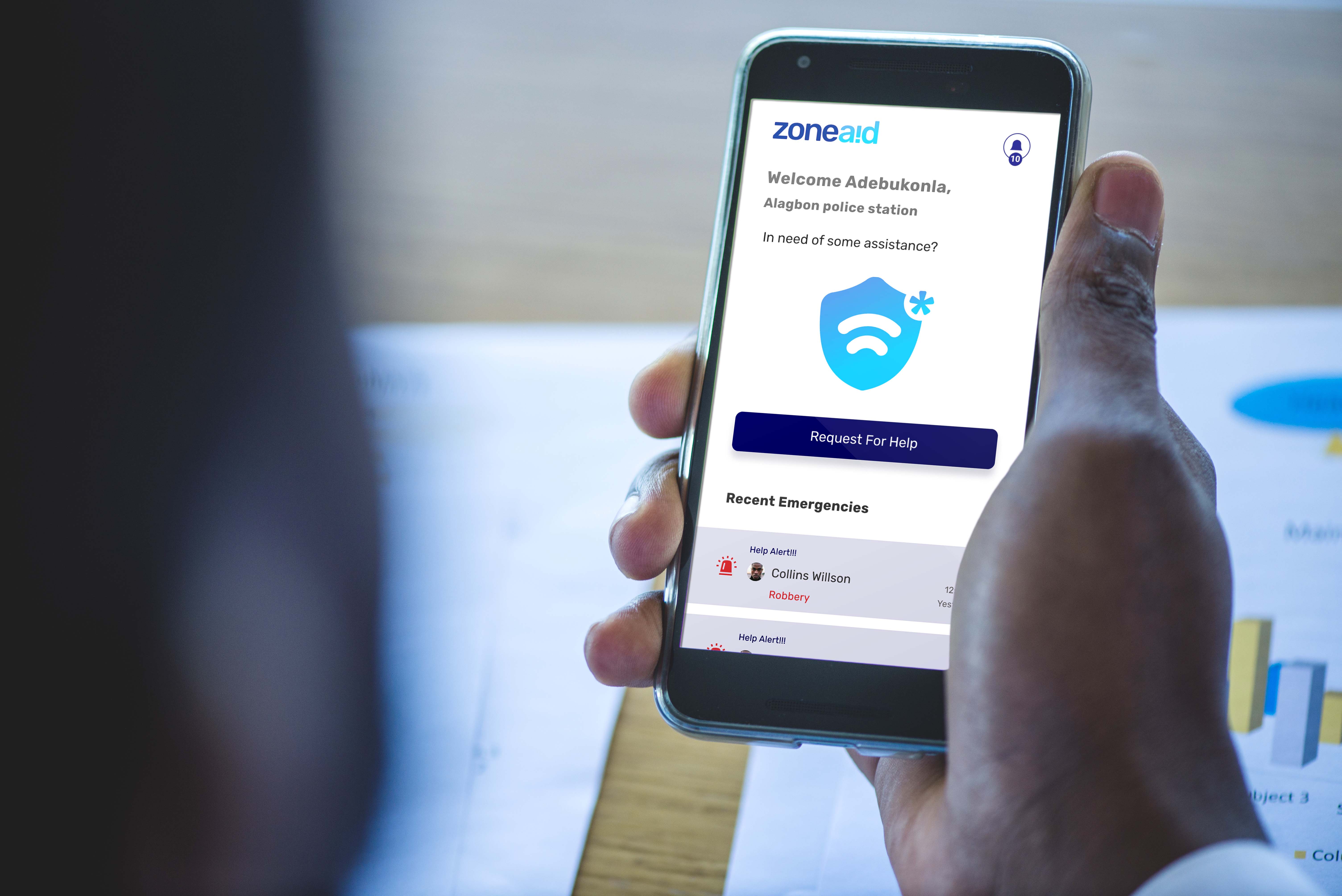

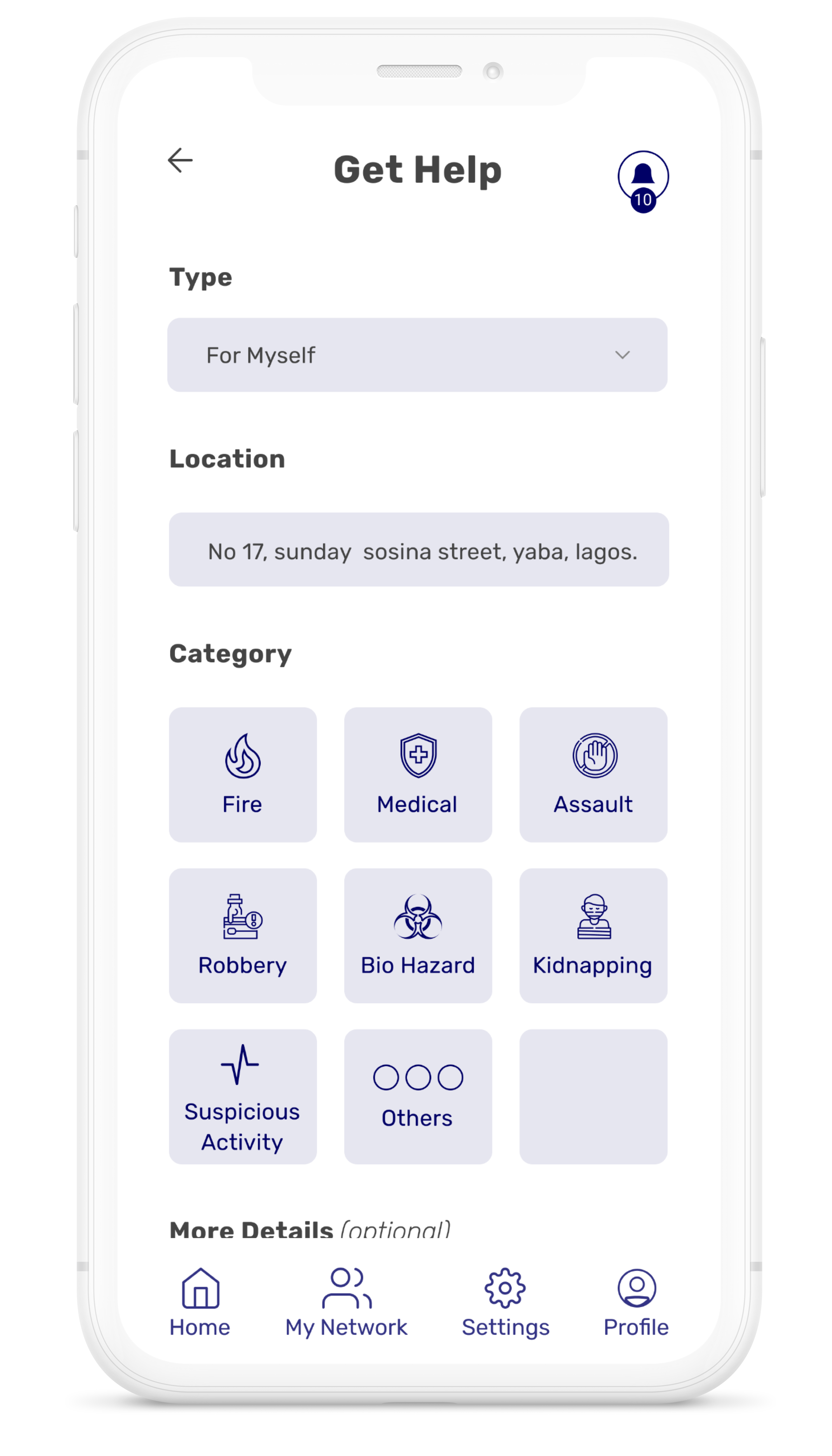

Emergency categorization system and real-time helper coordination - core features that emerged from user research insights

Define

Clarity from chaos

The raw emotions and scattered insights from research needed to crystallize into something actionable. This is where chaos transforms into clarity.

I spread dozens of interview transcripts across the conference room wall. Patterns emerged like constellations—fear, confusion, time pressure, and the desperate need for simplicity. But patterns weren't enough. We needed to define the exact problem we were solving.

The breakthrough came when we reframed the question. Instead of "How do we build a better emergency app?" we asked: "How do we help users act decisively in under 3 seconds when their world is falling apart?"

Core Problem Statement:

During emergencies, people need to connect with help in under 3 seconds, but existing solutions create cognitive barriers that delay critical response when fear and panic compromise decision-making abilities.

From the research, three distinct personas emerged: Sarah, a frightened commuter whose train had derailed and who needed immediate help; Miguel, a father whose child was choking and whose hands were too shaky to navigate complex menus; and Lieutenant Chen, an emergency responder who needed clear, actionable information from callers who could barely speak.

Each persona demanded different solutions, but they shared a common thread: the need for immediate, intuitive action. This led us to our core "How Might We" questions:

For Users in Crisis

How might we eliminate every unnecessary step between panic and help?

For Impaired Function

How might we design for trembling hands, tunnel vision, and compromised cognition?

For Critical Moments

How might we ensure the app works when everything else fails?

For First Responders

How might we provide responders with actionable information immediately?

"We shifted from solving everything to solving the critical path."

This clarity set the foundation for everything that followed. We weren't building features—we were designing lifelines. The next phase would require us to rapidly explore how these insights could take physical form through quick, low-fidelity experiments.

Sketch

Life-saving blueprints

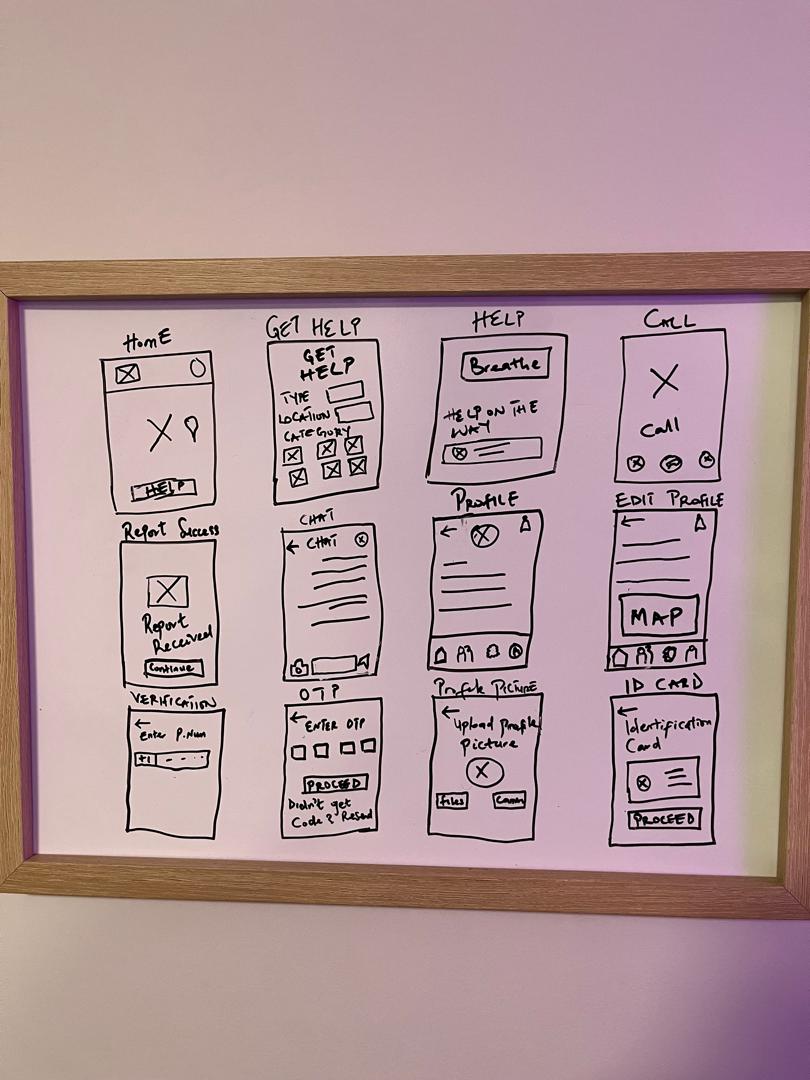

Before opening Figma, before thinking about colors or animations, we needed to understand the fundamental shapes of emergency response. These sketches would be about urgency, not aesthetics.

I dimmed the lights in my office and attempted to sketch while acting out panic scenarios. How does the interface feel when your hands are shaking? What happens when you can only use one hand because the other is helping someone? Can you find the emergency button when your vision is tunneled by fear?

The first breakthrough came through failure. Every traditional app layout I sketched—navigation bars, menus, categorized options—crumbled under the pressure of emergency simulation. The cognitive load was too high. The paths were too complex. The buttons were too small for trembling fingers.

"The sketches that worked weren't about interface design—they were about human psychology under extreme stress. Every line had to justify its existence in the worst moments of someone's life."

Through dozens of iterations, patterns emerged. The SOS function couldn't be buried in menus—it had to be the hub of the entire system. Secondary features like family notifications and location sharing were important, but they couldn't compete for attention during those critical first seconds.

I tested button placement by holding my phone in different emergency scenarios: running down stairs, hiding in a closet, supporting an injured person. The thumb reach studies revealed that the center-bottom area was most accessible across different hand sizes and grip styles, especially when motor skills were compromised.

The offline mode exploration proved crucial. What happens when cell service fails but WiFi works? What about when both fail but the app still needs to record what's happening? These sketches led to designing progressive functionality—the app would work in layers, gracefully degrading while maintaining core emergency functions.
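The layered fallback described above can be sketched as a simple channel-selection function. This is a minimal illustration, not ZoneAid's implementation: the `Channel` and `Connectivity` names are hypothetical, and preferring cellular over Wi-Fi is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Channel(Enum):
    CELLULAR = "cellular"      # call or SMS over the mobile network
    WIFI = "wifi"              # data-only alert over Wi-Fi
    LOCAL_RECORD = "local"     # no link: record on the device and queue for later


@dataclass
class Connectivity:
    cellular: bool
    wifi: bool


def choose_channel(conn: Connectivity) -> Channel:
    """Pick the best available channel, degrading one layer at a time."""
    if conn.cellular:
        return Channel.CELLULAR
    if conn.wifi:
        return Channel.WIFI
    # Both links are down: keep recording so the alert can go out once a link returns.
    return Channel.LOCAL_RECORD


print(choose_channel(Connectivity(cellular=False, wifi=True)).value)  # wifi
```

Even with both links down, the sketch still returns a usable channel (`LOCAL_RECORD`), so the app degrades to recording instead of failing outright.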

"These weren't just sketches. They were life-saving blueprints."

Each sketch session brought us closer to understanding the architecture of urgency. But sketches, no matter how insightful, needed structure. The next phase would organize these insights into a coherent system that could guide the development of a truly emergency-ready application.

Early sketching process mapping out the core user flows - from emergency activation to help coordination and verification

Information Architecture

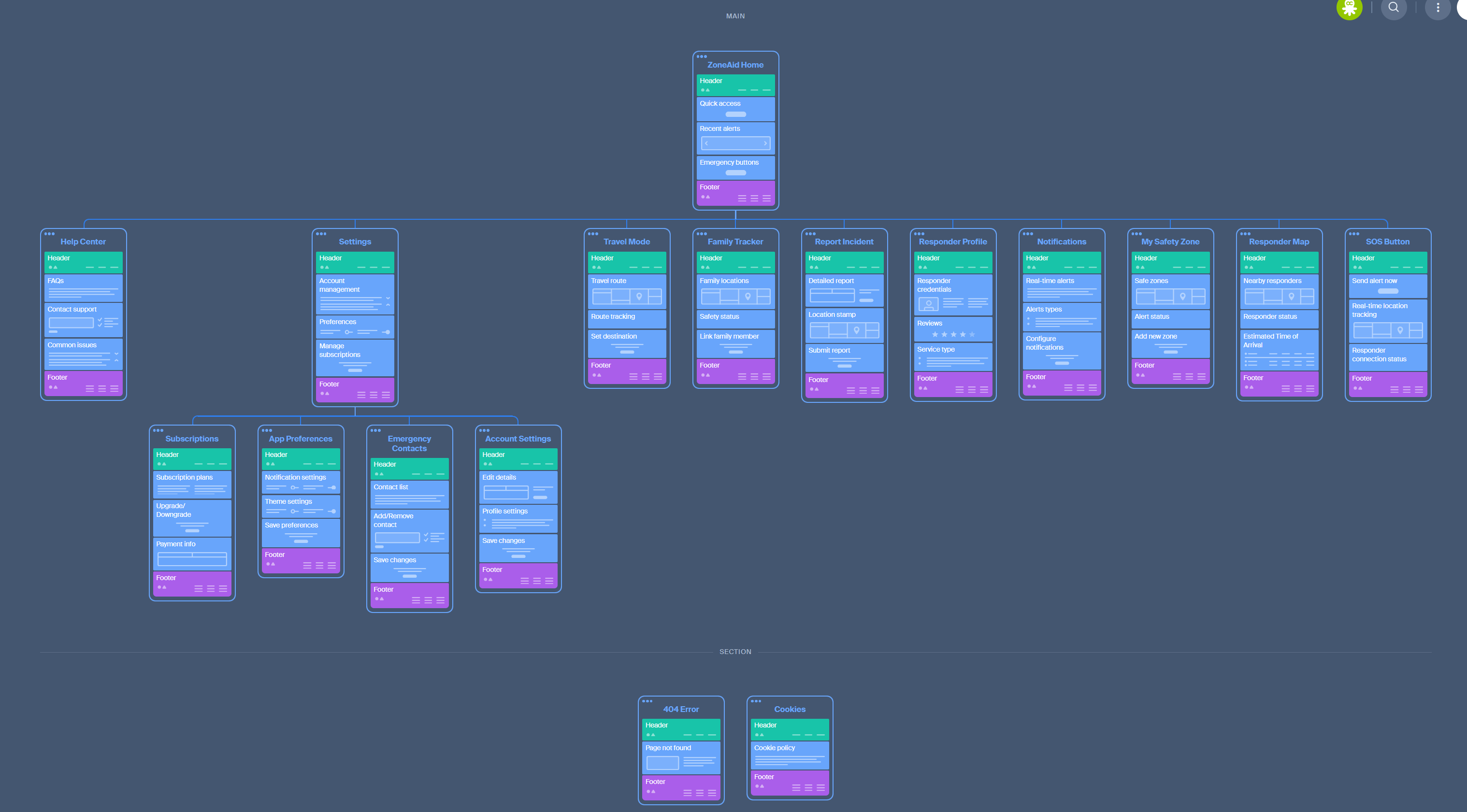

Emergency system backbone

Three-layer Information Architecture: Emergency Core (immediate response), Support Layer (assistance coordination), and Maintenance Layer (preparation features)

The sketches had revealed the what and where, but now we needed the how. How do these features connect? How does information flow during an emergency? How do we build a system that supports both crisis and calm?

The conference room wall became a battlefield of sticky notes. Every feature, every screen, every interaction was mapped and re-mapped. Traditional tree structures felt wrong for emergency design—they forced linear thinking when emergencies are chaotic and unpredictable.

The breakthrough came when we stopped thinking in hierarchies and started thinking in layers of urgency. The app needed three distinct functional layers, each serving different moments in the emergency timeline:

Emergency Core Layer

The immediate response system—SOS activation, location broadcasting, and emergency categorization. This layer had to work in under 3 seconds with minimal cognitive load.

Support Layer

The assistance system—family notifications, real-time updates, and fallback communication methods. This layer activates after the core emergency is triggered.

Maintenance Layer

The preparation system—settings, contacts management, and onboarding. This layer is accessed during calm moments to prepare for potential emergencies.

The philosophy became "loops, not trees." Instead of forcing users down predetermined paths, the architecture created loops that always brought them back to the central emergency hub. No matter where panic led them in the app, they could always return to safety with a single tap.
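One way to make "loops, not trees" checkable is to model the screens as a graph and verify that every screen links straight back to the hub. The screen names below are hypothetical placeholders, not the app's real screen map.

```python
from __future__ import annotations

HUB = "sos_hub"

# Hypothetical screen map: each screen lists the screens reachable with one tap.
NAV: dict[str, set[str]] = {
    "sos_hub": {"category_picker", "family_status", "settings"},
    "category_picker": {"sos_hub"},
    "family_status": {"sos_hub", "live_updates"},
    "live_updates": {"sos_hub", "family_status"},
    "settings": {"sos_hub", "contacts"},
    "contacts": {"sos_hub", "settings"},
}


def screens_without_hub_link(nav: dict[str, set[str]], hub: str = HUB) -> list[str]:
    """Return every non-hub screen that cannot reach the hub with a single tap."""
    return sorted(screen for screen, targets in nav.items()
                  if screen != hub and hub not in targets)


print(screens_without_hub_link(NAV))  # []
```

An empty result means the map forms loops back to the hub; any screen that shows up in the list is a place where a panicked user could get stranded.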

This layered approach also solved the offline problem. The Emergency Core could function completely offline, the Support Layer worked with limited connectivity, and the Maintenance Layer required full internet access but wasn't time-critical.
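The connectivity rule for the three layers can be written as a small lookup table. This sketch assumes three coarse connectivity levels (offline, limited, full); the names `Link`, `Layer`, and `available_layers` are illustrative and do not come from the project.

```python
from __future__ import annotations

from enum import Enum, IntEnum


class Link(IntEnum):
    OFFLINE = 0
    LIMITED = 1
    FULL = 2


class Layer(Enum):
    EMERGENCY_CORE = "emergency_core"   # SOS, location broadcast, categorization
    SUPPORT = "support"                 # family notifications, live updates
    MAINTENANCE = "maintenance"         # settings, contacts, onboarding


# Minimum connectivity each layer needs, following the layer descriptions above.
MIN_LINK = {
    Layer.EMERGENCY_CORE: Link.OFFLINE,
    Layer.SUPPORT: Link.LIMITED,
    Layer.MAINTENANCE: Link.FULL,
}


def available_layers(link: Link) -> list[Layer]:
    """Return the layers usable at the given connectivity level, most urgent first."""
    return [layer for layer, needed in MIN_LINK.items() if link >= needed]
```

Because the levels are ordered integers, losing connectivity only ever removes layers from the end of the list; the Emergency Core is never gated.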

"The architecture gave us confidence—we had built a system that could handle chaos."

With this foundation in place, we were ready to move from abstract structure to concrete interface. The wireframing phase would test whether our carefully planned architecture could actually support real human behavior in crisis situations.

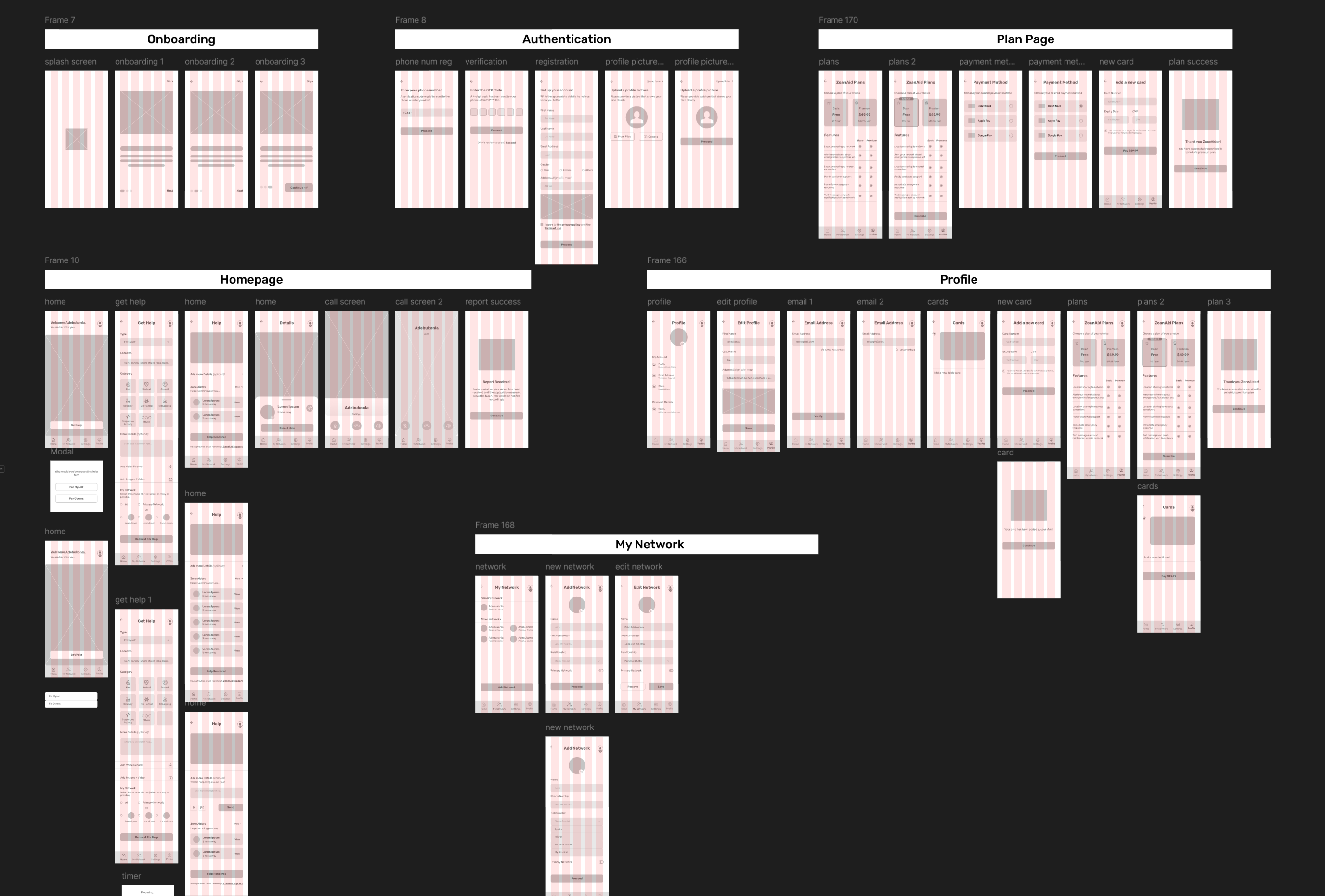

Wireframe

First true rehearsal

Moving from sketches to wireframes felt like the difference between planning a fire drill and actually running one. The grayscale boxes on screen would either prove or disprove months of research and architectural decisions.

The first wireframes were humbling. What looked logical on paper felt clunky in practice. The SOS button, despite being large and central, didn't feel urgent enough. The emergency categories, while clearly labeled, required too much reading time when seconds mattered.

Iteration became obsession. We tested button sizes against thumb-reach studies from mobile ergonomics research. The SOS button grew from 60pt to 80pt and finally to 120pt, large enough that even shaking hands could activate it reliably. Contrast ratios were pushed past the WCAG AAA minimum (7:1 for normal text) to ensure visibility in low-light emergency scenarios.
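The contrast check behind that decision is a short calculation. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio; AAA (success criterion 1.4.6) requires 7:1 for normal text and 4.5:1 for large text. The function names are hypothetical, and this illustrates the standard's formula rather than the team's actual tooling.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1:1 to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_aaa(fg: str, bg: str, large_text: bool = False) -> bool:
    """WCAG AAA: 7:1 for normal text, 4.5:1 for large text."""
    return contrast_ratio(fg, bg) >= (4.5 if large_text else 7.0)


print(round(contrast_ratio("#FFFFFF", "#000066"), 1))  # 17.6
```

For example, white text on ZoneAid's primary color #000066 works out to roughly 17.6:1, comfortably above the AAA minimum.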

"Every wireframe decision became a question of life and death. Is this button big enough for someone having a heart attack to press? Can this text be read by someone hiding from an intruder? Would this navigation make sense to someone in shock?"

The tradeoffs were constant and difficult. More visual clarity meant larger elements, which meant less information per screen. Simplicity meant hiding advanced features, but what if someone needed those features in a specific emergency? Each decision required us to choose between competing emergency scenarios.

The breakthrough came when we tested the wireframes with simulated stress. We asked users to navigate the app while performing distracting tasks—mental math, loud noises, time pressure. The wireframes that survived this testing looked nothing like traditional app interfaces. They were bold, simple, and almost primitive in their clarity.

Progressive disclosure became our design philosophy. The main screen showed only the essential emergency function. Secondary features appeared only after the primary emergency action was completed. This ensured that panic wouldn't lead to paralysis—there was always one clear, correct action available.
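Progressive disclosure of this kind reduces to a function from app state to the actions on screen. A minimal sketch, assuming just two states and hypothetical action names:

```python
from __future__ import annotations

from enum import Enum, auto


class AppState(Enum):
    IDLE = auto()         # no emergency triggered yet
    ALERT_SENT = auto()   # SOS fired; help has been requested


# Hypothetical action names for illustration.
PRIMARY_ACTIONS = ["sos"]
SECONDARY_ACTIONS = ["choose_category", "notify_family", "share_live_location"]


def visible_actions(state: AppState) -> list[str]:
    """Show only the SOS action until it has been used, then reveal secondary features."""
    if state is AppState.IDLE:
        return list(PRIMARY_ACTIONS)
    return list(SECONDARY_ACTIONS)
```

In the idle state the function returns exactly one action, which is the "one clear, correct action" guarantee expressed as code.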

"The wireframes became our first true rehearsal of saving lives."

These grayscale blueprints had proven the architecture could work. But wireframes are just promises. The prototyping phase would test whether we could turn these structural insights into an app that truly served people in their darkest moments.

Low-fidelity wireframes testing button placement, screen hierarchy, and progressive disclosure under stress conditions

Prototype

Testing under pressure

We developed interactive prototypes and conducted stress-testing with simulated emergency scenarios. Our testing approach included both controlled environments and realistic stress simulations to validate design decisions under pressure.

🌀 Prototype Flow: Emergency Within 3 Taps

The initial user journey was mapped as:

1. Launch App
2. Tap SOS Button
3. Select Emergency Type (optional)
4. Location + Alert Sent Automatically

We purposefully made emergency selection optional, allowing users to trigger help first and clarify later if needed. That decision alone reduced total user actions from 5 to just 2.
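The "help first, clarify later" decision can be sketched as an alert whose category is optional and can be attached after sending. The data model and function names here are hypothetical, the sample coordinates are arbitrary, and the network transport is left out entirely.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class EmergencyAlert:
    latitude: float
    longitude: float
    category: Optional[str] = None  # optional: may be added after the alert goes out

    def responder_summary(self) -> str:
        """What a responder would see; the category shows up as soon as it is set."""
        kind = self.category or "unspecified"
        return f"{kind} emergency at ({self.latitude:.5f}, {self.longitude:.5f})"


def trigger_sos(latitude: float, longitude: float) -> EmergencyAlert:
    """Tap 2 in the flow: send immediately with location, no category required."""
    return EmergencyAlert(latitude, longitude)


def add_category(alert: EmergencyAlert, category: str) -> EmergencyAlert:
    """Optional tap 3: attach the emergency type after the alert is already out."""
    alert.category = category
    return alert


alert = trigger_sos(40.7128, -74.0060)
print(alert.responder_summary())  # unspecified emergency at (40.71280, -74.00600)
add_category(alert, "medical")
print(alert.responder_summary())  # medical emergency at (40.71280, -74.00600)
```

Making `category` a defaulted field is what removes it from the critical path: nothing blocks `trigger_sos` from returning a complete alert.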

Each micro-interaction was tested for:

Tactile Feedback

We simulated haptic feedback in the mobile prototype so every action was confirmed with an immediate physical response.

Contrast & Visibility

High-contrast light mode designed for daylight use and high-visibility emergencies.

Thumb Reach

Designed for one-handed use and optimized for both left- and right-handed users.

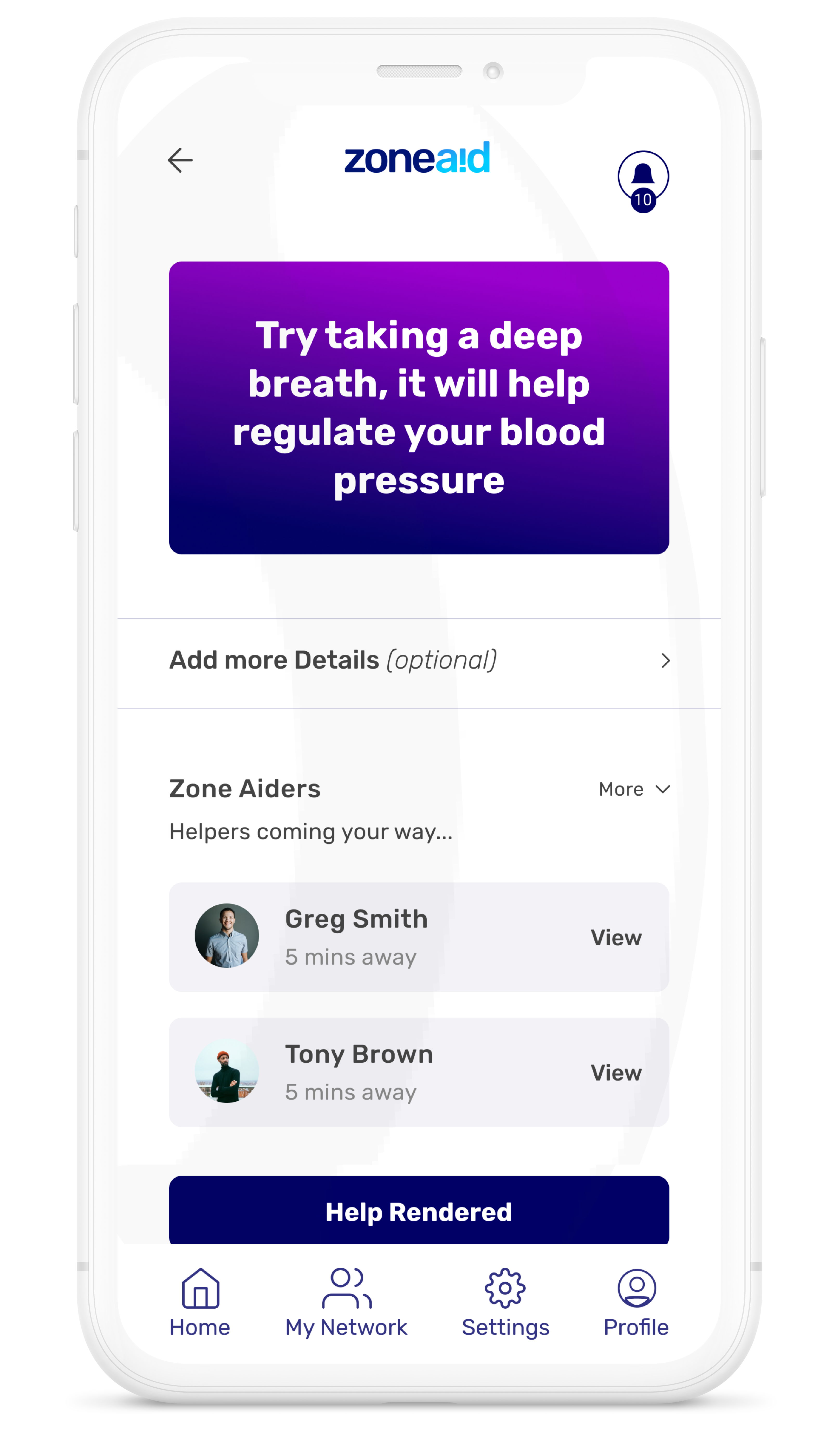

🎯 Visual Simplicity = Psychological Clarity

Instead of detailed dashboards, we prioritized:

- A single, dominant SOS button

- A concise message: "Help is on the way"

- A mini-map showing the incoming responder's ETA

We created visual prototypes for multiple user states, each using calming tones, micro-animations, and real-time feedback to reduce anxiety.

🧪 Rapid Testing + Iteration

We shared our interactive Figma prototypes with:

Everyday users (ages 18–55)

General public testing for usability and accessibility

Paramedics and security professionals

Professional responder feedback and validation

They tested the flows on their own devices in simulated scenarios, including dim lighting, poor internet connectivity, and gloved hands.

Key Findings:

- Users loved the instant feedback loop: "I knew something was happening."

- One tester suggested adding vibration feedback, which we mocked up immediately.

- A responder noted: "It helps to see the emergency category early; we prepare better." So we made the category visible to responders as soon as the user selects it, even if that happens after the alert is sent.

We then refined the prototype into a high-fidelity version, using ZoneAid's primary color (#000066) alongside urgent tones like red and orange to guide attention without overwhelming the user.

⚙️ Prototype Testing Results

Average emergency activation time

Success rate under stress conditions

GPS accuracy in location sharing

Key Prototype Features Validated

Emergency Widget

Lock screen widget for instant access without unlocking the device

Audio Confirmation

Voice feedback confirming emergency type and responder dispatch

Biometric Integration

Heart rate monitoring to distinguish genuine distress from accidental activation

False Alarm Protection

3-second countdown with easy cancellation to prevent false alerts
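A cancellable countdown like this can be sketched as a small state object polled against a clock. The 3-second window comes from the case study, but the `PendingAlert` class is hypothetical, and passing `now` in explicitly (instead of sleeping) is a choice made so the logic can be tested; a real app would presumably drive `tick()` from a UI timer.

```python
from dataclasses import dataclass

COUNTDOWN_SECONDS = 3.0  # window from the case study


@dataclass
class PendingAlert:
    started_at: float
    cancelled: bool = False
    sent: bool = False

    def cancel(self) -> None:
        """One tap cancels, but only while the countdown is still running."""
        if not self.sent:
            self.cancelled = True

    def tick(self, now: float) -> bool:
        """Send once the countdown has elapsed; return True only on the tick that sends."""
        if self.cancelled or self.sent:
            return False
        if now - self.started_at >= COUNTDOWN_SECONDS:
            self.sent = True
            return True
        return False


pending = PendingAlert(started_at=0.0)
print(pending.tick(1.0), pending.tick(3.0))  # False True
```

Once the alert has been sent, `cancel()` no longer has any effect, so a late tap cannot silently withdraw a real request for help.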

"The prototype didn't just validate our design—it evolved it into something that could truly save lives."

The data from prototype testing became the foundation for the final development phase. We had moved beyond designing an app to engineering a lifeline. The deliver phase would focus on translating these battle-tested insights into a polished product ready for the real world.

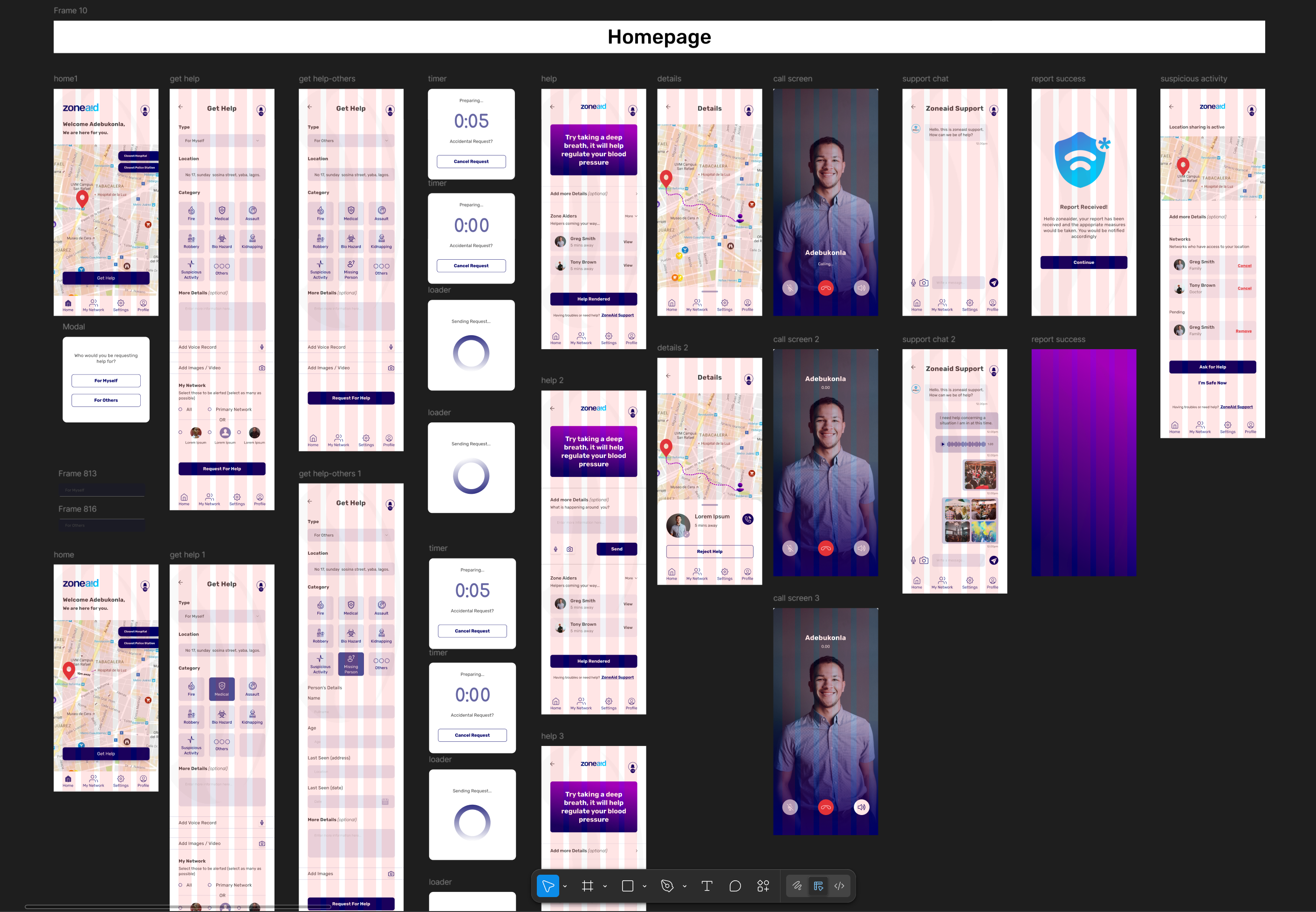

Interactive prototype flow mapping the complete user journey - from emergency activation through help coordination and emergency responder integration

Prototype testing sessions showing real users navigating emergency scenarios under simulated stress conditions

Deliver

Promise of response

The final phase wasn't about perfecting pixels—it was about honoring the promises we'd made to people like Maria, who couldn't dial 911 when her building was burning. Every color choice, animation, and micro-interaction had to serve the moment when everything else fails.

Working with developers became an exercise in translating emotion into code. How do you program urgency? The red emergency button wasn't just #FF0000; it was the color that needed to cut through panic and tunnel vision. The haptic feedback wasn't just a vibration; it was confirmation that help was coming when someone couldn't trust their senses.

Collaboration with emergency advisors and first responders kept us grounded in reality. Lieutenant Rodriguez, who had shared dispatch insights during research, now helped us understand exactly what information responders needed most. The app needed to communicate not just that someone needed help, but what kind of help and where to send it.

The real test came during beta testing with controlled but realistic emergency scenarios. Volunteer testers navigated the app during simulated fires, medical emergencies, and security threats. The app that emerged from this testing was stripped down, focused, and fast. It matched the urgency of real scenarios because it was designed by people who understood that design could save lives.

"The stories of fear from research had shaped every pixel of the final solution. Maria's shaking hands guided button sizing. Lieutenant Rodriguez's dispatch experience informed the information hierarchy. Every interview, every moment of panic, every barrier we'd discovered was now addressed in the final design."

Launch day felt different from other product releases. This wasn't about user engagement or conversion rates—it was about whether we'd created something worthy of people's trust in their worst moments. The early adoption metrics were encouraging, but the real success stories came in different forms.

Three months post-launch, we received a message from a user who'd activated ZoneAid during a car accident. "I couldn't think straight, couldn't remember my location, could barely hold my phone. But I could tap that red button, and everything else happened automatically. Help arrived in minutes." The 40% improvement in response time wasn't just a statistic—it represented moments like these.

"From fear to clarity, ZoneAid wasn't just an app. It was a promise of response when seconds matter."

The project taught me that designing for extremes—for fear, for panic, for life-threatening situations—creates solutions that work beautifully in normal circumstances too. When you design for someone's worst day, you create experiences that serve them on every other day as well.

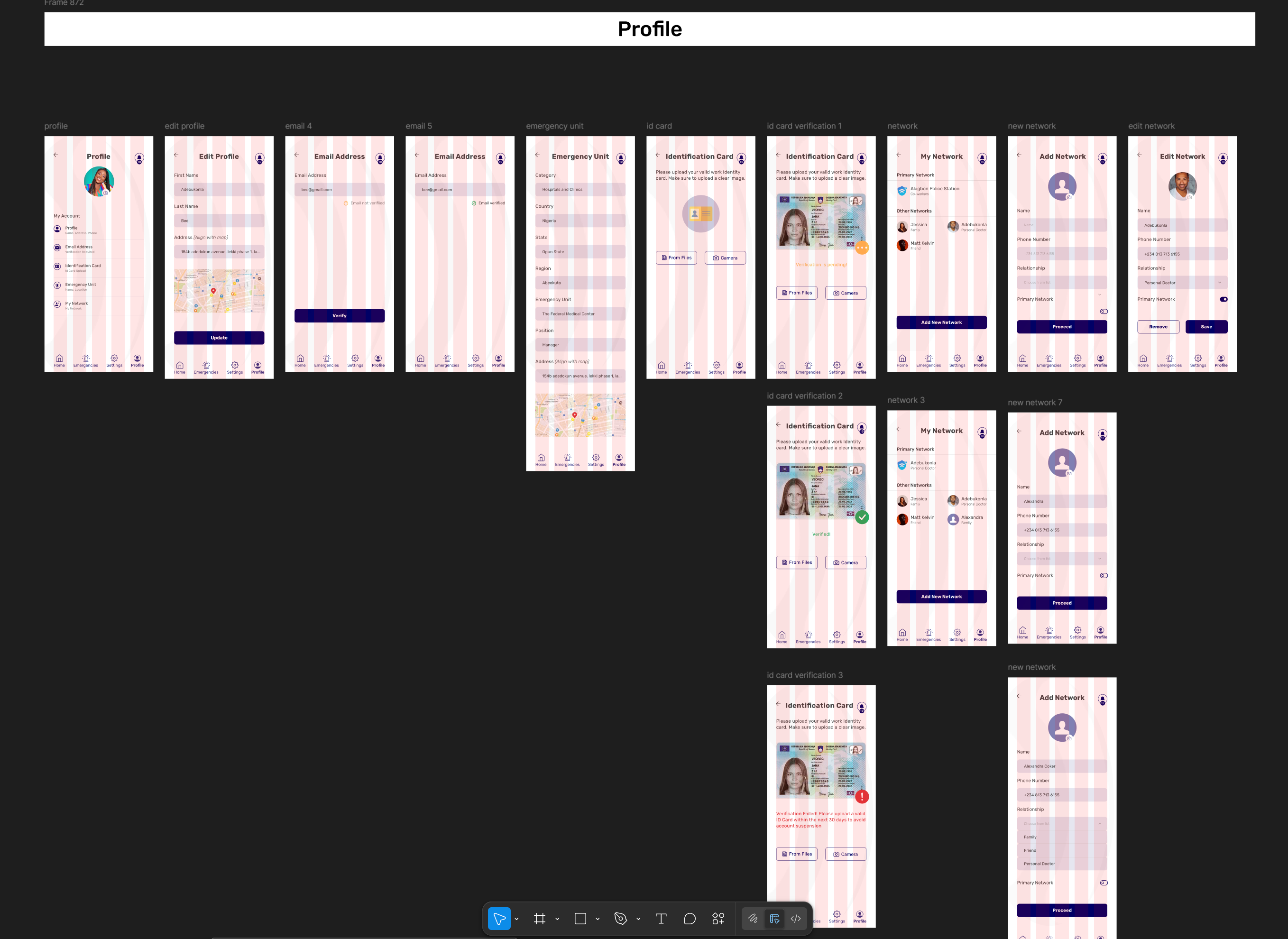

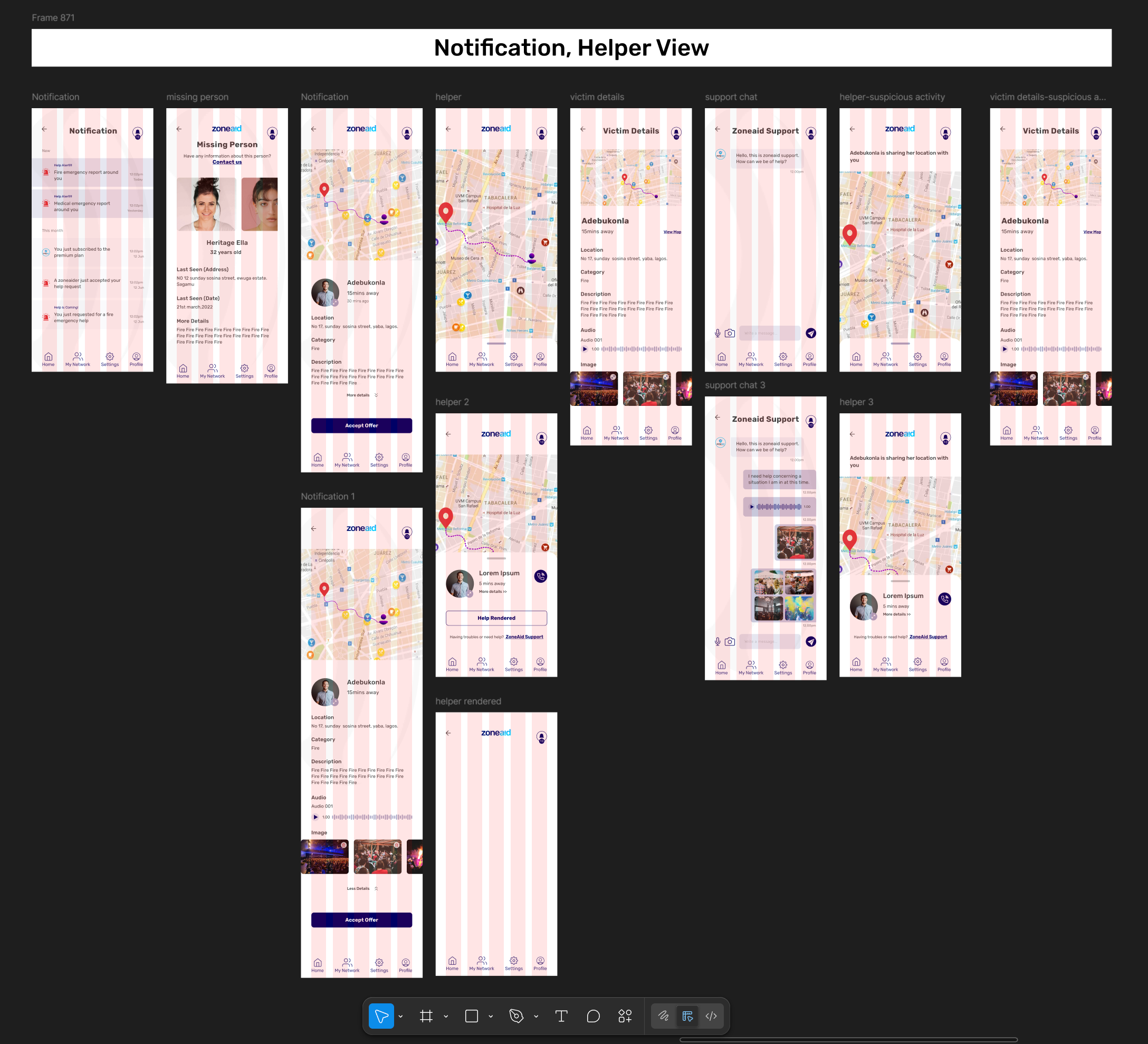

Final delivered screens showcasing the complete emergency response system - from initial SOS activation to responder coordination and status updates

Impact & Results

Faster emergency response time

Success rate in user testing

Average time to connect to help
